I’ve been using Pi-hole for a while now, installed on a Raspberry Pi 3. The time has come to replace my NAS (this model launched in late 2011). It has served me well, and I expect the same from the next one I’m buying. And the thing is… the new one comes with “Container Station”, which means it can run containers (LXC or Docker). I love my Pi-hole on the Raspberry Pi, but it’s time to consolidate things. Let’s run Pi-hole directly on the QNAP!

First things first, a disclaimer: I’m not responsible for what you do. This operation is almost risk free, but DNS resolution may stop working, and your granny may blame you for some pages that worked before you changed things. But you have to break a few eggs to make a tortilla…

Basic things

This is the official Pi-hole Docker image page. The image recently moved from the 4.x branch to 5.x, and the previous block lists are not compatible with the new version, so I will do a fresh installation from scratch.

All data will be persisted in a QNAP volume, so it can be protected with RAID, snapshots… that part is up to you.

You need to install the Container Station app on your QNAP and create a virtual switch so that you can use the Bridge network mode, which lets you give the container an IP from your LAN range.

The Process

It is a pretty straightforward process: open Container Station and go to Create (+). You will be prompted to search for a Docker image. Searching for pihole in Docker Hub shows several candidates, but the official one (pihole/pihole) tends to be the first match, with lots of stars.

I already have this image, so the option I see for pihole/pihole is Create, while for images I don’t have yet I see Install. To continue, press the Install/Create option for pihole/pihole. A new window will appear asking for the version (container tag) that you want to use for your deployment. For this example we will use v5.1.2.

After the disclaimer window stating that QNAP is not liable for any consequences of the third-party software you will be using, we finally arrive at the Create Container window, which is where we will focus our attention now.

First step: the name and the hardware resources to assign. I’ve limited the CPU to 25% and the RAM to 256MB, but I’m sure it will work with even fewer resources.

Now we go to Advanced Settings, where we will focus on:

Environment

Create the following environment variables: choose your upstream DNS servers, set the IP address Pi-hole will listen on, and set a password for the web interface. The password variable is optional: if you don’t specify one you can still log in to the web interface, but you will have to look in the container’s startup log for the password that was generated when the service initialized. A comprehensive list of available environment variables can be found here.

| Environment Variable | Value |
| --- | --- |
| ARCH | amd64 |
| VERSION | v5.1.2 |
| DNS1 | See below |
| DNS2 | See below |
| WEBPASSWORD | optional |
| DNSMASQ_USER | pihole |
| ServerIP | your_ip |
| TZ | UTC |

As for the values of your upstream DNS servers: choose two of those listed here.
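If you would rather keep these settings in a file than retype them in the GUI, the table can be written down as a small script. This is only a sketch: the IP `192.168.1.53`, the password `change-me` and the two upstream resolvers are placeholder values I picked for illustration, and the `KEY=value` output is the format that `docker run --env-file` reads, which is not the route Container Station takes.

```python
# Sketch: collect the Pi-hole container settings from the table and render
# them as KEY=value lines (the format read by `docker run --env-file`).

# Example upstream resolvers (assumption: replace with the two you chose).
UPSTREAM_DNS = ("1.1.1.1", "9.9.9.9")


def build_env(server_ip, web_password=None, tz="UTC", upstreams=UPSTREAM_DNS):
    """Return the environment variables from the table as a dict."""
    if len(upstreams) != 2:
        raise ValueError("expected exactly two upstream DNS servers")
    env = {
        "ARCH": "amd64",
        "VERSION": "v5.1.2",
        "DNS1": upstreams[0],
        "DNS2": upstreams[1],
        "DNSMASQ_USER": "pihole",
        "ServerIP": server_ip,
        "TZ": tz,
    }
    # WEBPASSWORD is optional: when it is left out, a password is generated
    # at startup and written to the container log.
    if web_password:
        env["WEBPASSWORD"] = web_password
    return env


def render_env_file(env):
    """Render the variables one per line as KEY=value."""
    return "\n".join(f"{key}={value}" for key, value in env.items()) + "\n"


# Placeholder values, not taken from a real network.
env = build_env("192.168.1.53", web_password="change-me")
print(render_env_file(env), end="")
```

Keeping the values in one place also makes it easier to recreate the container with the same settings when you upgrade later.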

Networking

As for networking, you can assign the container’s hostname. The most important thing is to change the network mode to Bridge: thanks to the virtual switch (create it if you haven’t yet), you can assign a static IP within your local LAN range. Make sure this IP matches the one you specified in the environment variables.
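Since a mismatch between the static IP and the ServerIP variable is easy to miss, here is a minimal check using Python’s standard `ipaddress` module. The function name `check_container_ip` and the addresses in the usage note are made up for the example; use your own LAN range.

```python
import ipaddress


def check_container_ip(container_ip, lan_cidr, server_ip_env):
    """Return a list of problems; an empty list means the settings agree."""
    problems = []
    ip = ipaddress.ip_address(container_ip)
    lan = ipaddress.ip_network(lan_cidr, strict=True)
    if ip not in lan:
        problems.append(f"{ip} is outside the LAN range {lan}")
    elif ip in (lan.network_address, lan.broadcast_address):
        problems.append(f"{ip} is a reserved address in {lan}")
    # The static IP set under Networking must equal the ServerIP variable.
    if ip != ipaddress.ip_address(server_ip_env):
        problems.append(f"ServerIP is {server_ip_env}, but the container uses {ip}")
    return problems
```

For example, `check_container_ip("192.168.1.53", "192.168.1.0/24", "192.168.1.53")` returns an empty list, while an IP from another subnet is reported as a problem.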

Shared Folders

As we are on a NAS, we can persist the information on a volume, so we keep our data when we kill the Pi-hole container to upgrade the version (do not use the upgrade options inside the container, just replace the whole container). First we need to create 2 folders in the Container volume:

- /pihole/etc-pihole

- /pihole/etc-dnsmasq.d

And to persist the information to those folders, in Shared Folders you have to add:

| Path | Shared Folder |
| --- | --- |
| /etc/pihole | /Container/pihole/etc-pihole |
| /etc/dnsmasq.d | /Container/pihole/etc-dnsmasq.d |
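The folder creation and the two mappings can also be sketched in a few lines of Python. Note the assumptions: `prepare_volumes` is a helper name I made up, the demo runs against a temporary directory rather than the NAS, and the real filesystem path of the Container shared folder depends on your QNAP, so it is passed in as a parameter. The `host:container` strings follow the form Docker’s `-v` option expects.

```python
from pathlib import Path
import tempfile

# Container path -> folder relative to the shared-folder root (from the table).
VOLUMES = {
    "/etc/pihole": "pihole/etc-pihole",
    "/etc/dnsmasq.d": "pihole/etc-dnsmasq.d",
}


def prepare_volumes(share_root):
    """Create the host folders and return `host:container` mapping strings."""
    mappings = []
    for container_path, relative in VOLUMES.items():
        host_dir = Path(share_root) / relative
        # exist_ok=True makes the call safe to repeat on upgrades.
        host_dir.mkdir(parents=True, exist_ok=True)
        mappings.append(f"{host_dir}:{container_path}")
    return mappings


# Demo against a temporary directory; on the NAS, share_root would be the
# filesystem path of the Container shared folder (assumption: it varies).
with tempfile.TemporaryDirectory() as demo_root:
    for mapping in prepare_volumes(demo_root):
        print(mapping)
```

Because the folders live outside the container, deleting and recreating the container with a newer tag leaves the block lists and settings in place.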

After that we are ready to Create it.

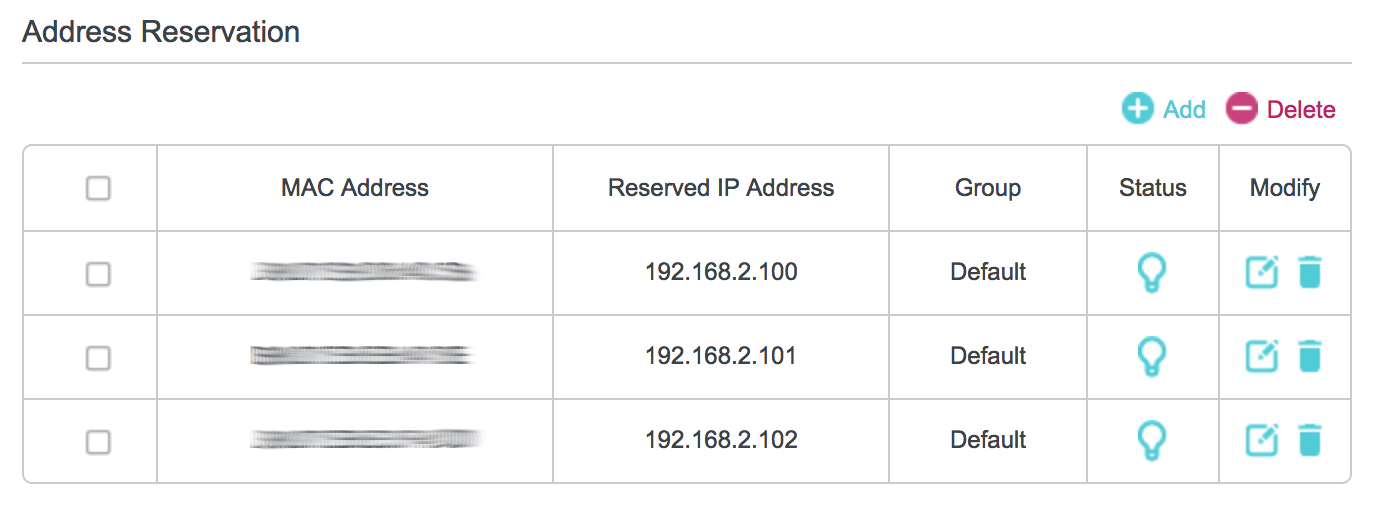

Now it’s time to configure your LAN’s DHCP server so that its DNS server option points to Pi-hole.

Now you can open the Pi-hole web interface, sit back, and enjoy watching the Queries Blocked counter rise.
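Before pointing the whole LAN at the new container, it can be worth checking that it actually answers DNS queries. The sketch below uses only the standard library: it builds a minimal A-record query by hand and sends it over UDP to port 53. The function names and the default hostname `example.com` are my own choices for illustration, and it only checks that some DNS response comes back, not what the answer contains.

```python
import socket
import struct


def build_query(hostname, query_id=0x1234):
    """Build a minimal DNS query packet for an A record."""
    # Header: id, flags (recursion desired), 1 question, 0 other records.
    header = struct.pack("!HHHHHH", query_id, 0x0100, 1, 0, 0, 0)
    # The name is encoded as length-prefixed labels ending with a zero byte.
    qname = b"".join(
        bytes([len(label)]) + label.encode("ascii")
        for label in hostname.rstrip(".").split(".")
    ) + b"\x00"
    question = qname + struct.pack("!HH", 1, 1)  # QTYPE=A, QCLASS=IN
    return header + question


def pihole_answers(server_ip, hostname="example.com", timeout=2.0):
    """Return True if the server replies to a DNS query with a matching id."""
    query = build_query(hostname)
    with socket.socket(socket.AF_INET, socket.SOCK_DGRAM) as sock:
        sock.settimeout(timeout)
        try:
            sock.sendto(query, (server_ip, 53))
            reply, _ = sock.recvfrom(512)
        except OSError:  # covers timeouts and unreachable hosts
            return False
    # The first two bytes echo the id; the top bit of the flags marks a response.
    return len(reply) >= 4 and reply[:2] == query[:2] and bool(reply[2] & 0x80)
```

Calling `pihole_answers("192.168.1.53")` (with your container’s IP in place of this placeholder) should return `True` once the container is up; if it returns `False`, check the container log and the Bridge network settings before changing DHCP.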